Protecting Minors on Social Media: Why Age Verification Matters in 2026

The risk landscape for minors has intensified

Social media has become close to ambient infrastructure for young people: always on, always nearby, and deeply intertwined with identity, friendship, learning, and entertainment. That ubiquity is exactly why child safety has moved from a “nice-to-have” policy area to a core operational capability for platforms. As kids increasingly engage with these platforms, their exposure to online risks—including harmful content and predatory behavior—has become a central concern.

In the United States, the Surgeon General’s advisory on social media and youth mental health describes youth social media use as nearly universal (with majorities reporting very frequent use) while also stressing that there is not yet enough evidence to conclude social media is sufficiently safe for children and adolescents. The same advisory notes that although many platforms set a minimum age (often 13), substantial numbers of younger children use social media anyway, an early signal that policy-only age rules rarely hold in the real world.

From a Trust & Safety standpoint, the risk is not one thing. It’s a cluster of content risks, contact risks, and conduct risks that interact with each other—and with product design.

Cyberbullying and harassment are still routine experiences for many teens. In a large survey, Pew Research Center reported that teen girls were more likely than teen boys to experience multiple types of online harassment, and older teens were more likely than younger teens to report multiple forms of cyberbullying. The pain here is not abstract. It can be cumulative, socially contagious, and hard to escape when your phone is your primary social venue. LGBTQ+ youth and minors who lack family support face heightened vulnerability to online abuse.

Social platforms also expose minors to higher-stakes exploitation dynamics. UNICEF describes online sexual exploitation and abuse as a major threat to children and emphasizes that AI is changing how children are exposed to it. On the reporting side, the National Center for Missing & Exploited Children shows how vast the scale of suspected child sexual exploitation reporting has become: its CyberTipline data indicates 20.5 million reports in 2024, down from 36.2 million in 2023. That is still an enormous volume, and it underscores why “we’ll just moderate harder” is rarely a credible standalone strategy. Risk levels and regulatory responses also vary by country: some have implemented stricter controls, while others face greater enforcement challenges.

In the United Kingdom and beyond, the Internet Watch Foundation likewise documents the scale of abuse imagery its analysts confirm online. That data points to both the magnitude of the problem and the reality that abuse material is quickly re-uploaded, migrates, and reappears across services.

Then there’s the newer frontier: synthetic media. In early 2026 reporting, Reuters summarized a surge in AI-generated child sexual abuse material and highlighted how prosecutors and regulators are increasingly scrutinizing the role of algorithmic amplification in spreading illegal content, including content involving minors. Even if your platform does not “allow” that content, any recommender system that accelerates reach can become part of the harm pathway.

Finally, youth safety is also a mental health question. World Health Organization reporting in Europe points to rising “problematic social media use” among adolescents (7% in 2018 to 11% in 2022), reinforcing why regulators increasingly talk about design features—not just content moderation. And because self-harm and suicide are high-stakes public health concerns for adolescents, efforts to limit exposure to harmful content—and to reduce algorithmic “rabbit holes”—have become part of mainstream policy expectations.

The uncomfortable conclusion is also the practical one: at social-media scale, manual review alone cannot reliably prevent underage access or consistently shield minors from downstream harms. Evidence and enforcement trends now treat prevention as a distinct layer of safety—not merely a subset of moderation.

Regulation is converging on proactive age assurance

By 2026, the most important shift is not “there are more rules.” It is that many regulatory regimes now expect platforms to make risk-based, proactive choices—grounded in reasonable age assurance—rather than leaning on symbolic consent screens.

In the European Union, the European Commission published detailed guidelines on the protection of minors under the Digital Services Act in July 2025. The guidelines recommend concrete product measures such as setting minors’ accounts to private by default, adjusting recommender systems to reduce “rabbit holes,” strengthening blocking/muting controls, restricting the ability to distribute minors’ content, and disabling by default certain high-engagement features (for example, streaks, autoplay, and push notifications). Crucially for age assurance, they also recommend effective age assurance methods that are accurate, reliable, robust, non-intrusive, and non-discriminatory—pairing age verification for higher-risk contexts (like adult content) with age estimation for other contexts where a lower age threshold or different risk profile applies.
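
As a rough illustration of the defaults described above, the recommended measures could be expressed as account settings applied when a user is assessed to be a minor. This is a sketch only: the field names, the adult defaults, and the `defaults_for` helper are hypothetical and are not taken from the guidelines or from any platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccountDefaults:
    """Hypothetical per-account settings; field names are illustrative only."""
    private_profile: bool
    streaks_enabled: bool
    autoplay_enabled: bool
    push_notifications_enabled: bool
    content_downloadable_by_others: bool


# Protective defaults for accounts assessed as belonging to minors,
# loosely mirroring the measures listed in the July 2025 guidelines.
MINOR_DEFAULTS = AccountDefaults(
    private_profile=True,
    streaks_enabled=False,
    autoplay_enabled=False,
    push_notifications_enabled=False,
    content_downloadable_by_others=False,
)

# Assumed adult defaults, shown only for contrast.
ADULT_DEFAULTS = AccountDefaults(
    private_profile=False,
    streaks_enabled=True,
    autoplay_enabled=True,
    push_notifications_enabled=True,
    content_downloadable_by_others=True,
)


def defaults_for(is_assessed_minor: bool) -> AccountDefaults:
    """Return the starting settings for a new account."""
    return MINOR_DEFAULTS if is_assessed_minor else ADULT_DEFAULTS
```

Keeping the protective profile as one immutable object makes it easier to audit which defaults a minor's account actually received.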

The EU has also moved toward standard-setting, not just enforcement. The Commission’s “EU approach to age verification” describes a blueprint (released July 2025, updated with a second blueprint in October 2025) designed to let users prove they are over 18 without sharing other personal information, emphasizing privacy-preserving implementation and interoperability with future EU digital identity infrastructure.

In the UK, Ofcom has framed robust age checks as a cornerstone of its online safety regime. Its guidance and public communications emphasize that age assurance must be “highly effective,” and it explicitly rejects self-declaration as a “highly effective” method when services need strong age protections. That position is increasingly backed not just by guidance, but by real enforcement. On February 23, 2026, Reuters reported an Ofcom fine of £1.35 million against an adult content company for failing to implement adequate age checks, an illustration that regulators are now willing to impose meaningful penalties when companies treat age assurance as optional.

Australia has gone further in one respect: it has adopted a “minimum age” account-holding obligation for certain social media services. The Office of the Australian Information Commissioner states that from 10 December 2025, age-restricted social media platforms must take “reasonable steps” to prevent Australians under 16 from creating or keeping an account, and that the framework incorporates privacy protections for age assurance and for third parties involved in age assurance. The eSafety Commissioner similarly describes the obligation, the policy intent (reducing exposure to risks while logged in), and potential penalties that can reach tens of millions of AUD for corporations that fail to take reasonable steps.

In the U.S., the regulatory picture is more fragmented. Federally, child privacy and consent obligations continue to evolve: the Federal Trade Commission finalized COPPA Rule amendments, with a compliance date that—per the Federal Register—gives most regulated entities until April 22, 2026 to comply (with some exceptions for specified provisions). At the same time, many state-level efforts to mandate social-media age verification have run into constitutional challenges, demonstrating that “age assurance” is not only a technical problem, but also a governance, proportionality, and civil liberties problem that platforms must navigate carefully.

What emerges from these different regimes is a shared direction of travel:

- Age assurance is treated as a preventive control, not merely a “policy requirement.”

- “Reasonable steps” and “risk-based measures” matter more than checkbox compliance.

- Privacy expectations are rising alongside safety expectations; regulators are increasingly explicit that age assurance must respect data protection principles and fundamental rights.

- ID verification is becoming a key component of compliance, as regulatory regimes increasingly require companies to implement robust methods to verify user identities and ages.

Why self-declared ages and basic age gates no longer protect

The “enter your birthday” age gate had a long run. It was quick, cheap, and easy to ship.

In 2026, it is also widely understood—by regulators, by parents, and frankly by teenagers—to be trivial to bypass. That makes it a weak safety measure and, increasingly, a weak compliance story.

Regulators are becoming unusually direct here. Ofcom’s published guidance on age checks lists methods it considers capable of being “highly effective” (including approaches such as photo ID matching and facial age estimation) and explicitly states that self-declaration of age is not “highly effective” because it cannot reliably verify a user’s age.

The UK Information Commissioner’s Office is similarly clear that services should avoid relying solely on self-declaration if there may be risk to the child, and that any use of self-declaration must be proportionate to the risks and supported by evidence of robustness and effectiveness.

The EU’s July 2025 guidelines reinforce the same core theme: platforms should deploy effective age assurance methods, and those methods must be appropriate to the risks—with stronger measures for higher-risk contexts and lower-friction approaches where proportionate.

Operationally, basic age gates also create a false sense of safety. If harmful features, content, or contact pathways remain accessible to underage users who self-declare as adults, a platform may still face the same recurring problems:

- child safety incidents that trigger investigations, media scrutiny, and brand damage, even if the platform can point to an age gate;

- claims that the platform did not take “reasonable steps” or “appropriate and proportionate measures” to prevent underage access, depending on jurisdiction;

- internal Trust & Safety overhead from avoidable incidents (appeals, reports, escalations) that could have been prevented by earlier flow-level friction at the right moment.

Document-based verification methods, which extract the user's date of birth from government-issued IDs, offer a more robust way to confirm age accuracy.

In short: self-declaration is not protection. It is, at best, a low-assurance signal that can be part of a wider, risk-tiered system—but only when the stakes are genuinely low and the residual risk is acceptable.

Age gating and age verification are different capabilities

A useful way to think about this is that “age gating” is a UI pattern, while “age verification” is a control system.

Age gating typically means putting an entry barrier in front of a service or feature, often with self-declared age information. It can reduce accidental exposure, but it does not establish whether a user is actually above the age threshold.

Age verification (and the broader concept of age assurance) aims to establish a level of confidence that is aligned with risk. That confidence can come from multiple types of signals and methods—some higher friction, some lower friction—chosen in proportion to what could go wrong. The ICO explicitly describes age assurance as encompassing multiple techniques (including self-declaration, AI/biometric systems, technical measures, tokenised approaches, and hard identifiers), and emphasizes the need to match certainty to risk while protecting children’s rights. Common methods include credit card verification, which leverages the association of credit cards with adult status for convenience, though it can be bypassed or exclude users without credit cards. Document-based verification often relies on government-issued IDs such as a driver's license, which provides high accuracy in confirming a user's date of birth but may raise accessibility and privacy concerns.

The most practical model for social platforms in 2026 is risk-based age assurance, because it maps cleanly onto how platforms actually work:

- Not every surface has the same risk. A “public profile + open DMs” experience is different from a “private friend graph” experience. A general feed is different from a mature-content feed. Monetization features add different incentives and abuse patterns.

- Not every user needs the same friction. Many adults can be passed quickly; a smaller set of borderline or high-risk sessions can be stepped up.

- Not every jurisdiction expects the same thing. Some rules emphasize “reasonable steps,” others specify “highly effective” methods; many emphasize proportionality.

Robust verification processes should ensure that a valid ID is used to confirm user identity and age, supporting compliance with legal requirements and improving accuracy.

This is also where automation becomes essential. Ofcom’s framing of “highly effective” age assurance is explicitly designed to be technology-neutral and future-proof—meaning platforms need scalable, repeatable, measurable processes, not manual exceptions.

Facial recognition technology in age verification: promise and pitfalls

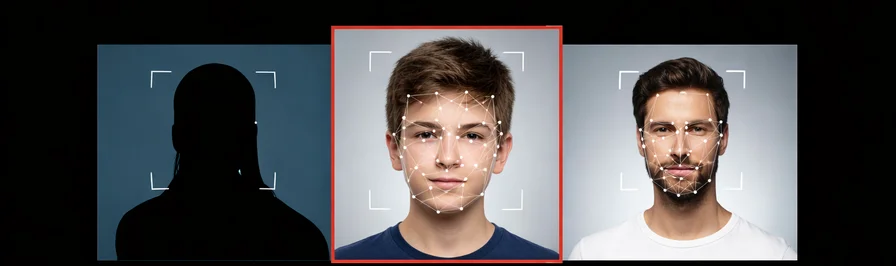

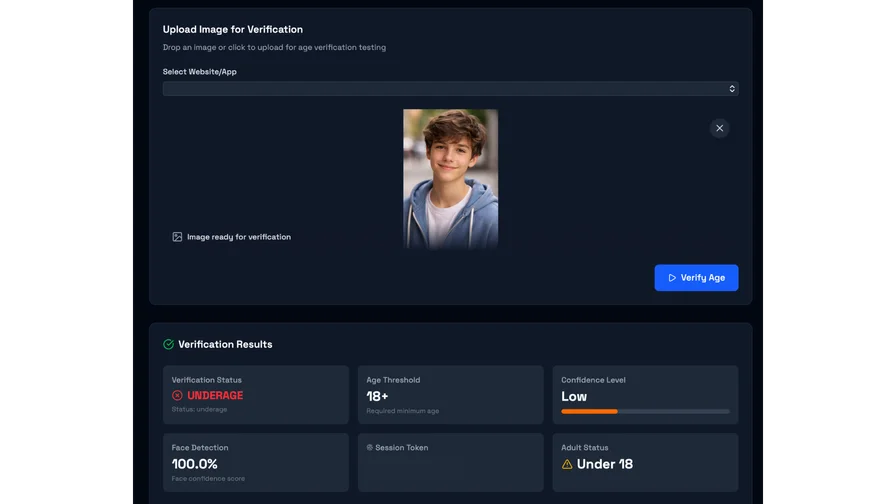

Facial recognition technology has rapidly become a cornerstone of modern age verification systems, especially as social media platforms and online services seek more reliable ways to verify a user’s age. By leveraging facial age estimation, these systems analyze facial features—such as skin texture, bone structure, and other biometric markers—to estimate whether a user falls within a certain age bracket. The Age Verification Providers Association has recognized the growing adoption of facial recognition as a verification method, noting its potential to streamline online age verification while helping platforms meet legal and regulatory requirements.

One of the most compelling advantages of facial recognition in age assurance methods is its ability to deliver a frictionless user experience. Instead of requiring users to upload sensitive personal information or government-issued IDs, facial age estimation allows for quick, real-time verification, often with just a selfie or a brief camera scan. This is particularly valuable for social media platforms and online spaces where minimizing barriers to entry is crucial, yet there is a pressing need to restrict access to age-restricted content and prevent minors from creating social media accounts or accessing harmful material.

However, the promise of facial recognition technology comes with significant pitfalls. Accuracy can vary across different demographics, with studies showing that age estimation algorithms may be less reliable for people of color, individuals with disabilities, or those whose facial features do not align with the data used to train these systems. This raises concerns about fairness and the risk of inadvertently excluding legitimate users or failing to protect children in vulnerable groups.

Privacy implications are another major concern. Facial recognition systems inherently process biometric data, which is considered highly sensitive personal information. If not properly secured, this data can be susceptible to identity theft or unauthorized access. Regulatory bodies have started to address these risks: for example, the Supreme Court and other authorities have highlighted the need for robust safeguards around biometric data collection and storage. In the U.S., laws like the Children’s Online Privacy Protection Act (COPPA) require parental consent before collecting data from children under 13, but facial recognition technology can sometimes circumvent traditional consent mechanisms, underscoring the need for more robust and transparent age verification systems.

To address these challenges, age verification providers are developing privacy-first age assurance technology. Some systems now use an age bracket signal, confirming only that a user is above a minimum age without revealing their exact age or storing unnecessary data. Others integrate verification with government-issued IDs, such as a driver’s license or national ID, while ensuring that only the relevant age information is processed and retained. These innovations aim to balance the need to protect children and restrict access to age-restricted content with the imperative to safeguard users’ privacy and free speech rights.
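
To make the age bracket idea concrete, here is a minimal sketch of reducing a date of birth to a single threshold answer. It assumes the date of birth has already been obtained by some verification step. The names `AgeBracketSignal`, `age_on`, and `to_bracket_signal` are hypothetical and do not describe any provider's actual interface.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AgeBracketSignal:
    """The only value the platform keeps: a yes/no answer for one threshold."""
    minimum_age: int
    meets_minimum: bool


def age_on(date_of_birth: date, today: date) -> int:
    """Whole years elapsed between date_of_birth and today."""
    had_birthday = (today.month, today.day) >= (date_of_birth.month, date_of_birth.day)
    return today.year - date_of_birth.year - (0 if had_birthday else 1)


def to_bracket_signal(date_of_birth: date, minimum_age: int, today: date) -> AgeBracketSignal:
    """Reduce a date of birth to a threshold signal.

    The caller is expected to discard the date of birth afterwards
    rather than persist it (an assumption of this sketch).
    """
    return AgeBracketSignal(minimum_age, age_on(date_of_birth, today) >= minimum_age)
```

Downstream systems then see only `meets_minimum`, so a leak of stored signals would not reveal anyone's exact age or birthday.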

Emerging approaches, such as operating system-level age verification (as proposed in Colorado), seek to shift the responsibility for age checks from individual app developers and online platforms to the device or operating system itself. While this could streamline the user experience and reduce the risk of identity theft, it also raises new questions about mass surveillance and the potential for government overreach into users’ online activities.

Ultimately, the integration of facial recognition technology into age verification systems offers both promise and pitfalls. When designed with privacy, security, and fairness at the forefront, these systems can help social media platforms and online services verify a person’s age, prevent minors from accessing inappropriate content, and protect children in digital environments. However, ongoing vigilance is required to ensure that the use of biometric data does not compromise sensitive personal information, undermine free speech, or expose users to new risks. As the Free Speech Coalition and other advocacy groups have emphasized, any verification method must strike a careful balance—protecting young people and upholding free speech rights, while respecting the privacy and security of all users.

Privacy-first age assurance is now a hard requirement, not an aspiration

Age assurance is inevitably about personal data. But “privacy-first” does not mean “no data.” It means minimizing data, limiting purpose, and designing the system so that the platform learns only what it needs to know.

In Europe, privacy expectations have become unusually explicit. The EU age verification blueprint is described as enabling users to prove they are over 18 without sharing other personal information, positioning privacy-preserving age verification as a reference standard rather than a bespoke, platform-by-platform invention. However, users often express concerns about their own privacy when submitting personal data for age verification, worrying about how their information may be used or stored by third parties.

The European Data Protection Board has also made the privacy stakes unmistakable. Its 2025 statement on age assurance emphasizes that age assurance should be risk-based and proportionate, “least intrusive” among available options while still effective, and that age assurance often triggers the need for a Data Protection Impact Assessment because of the potential high risk to rights and freedoms. The EDPB further warns that age assurance should not become a backdoor for identifying, locating, profiling, or tracking people for unrelated purposes.

In the UK, the ICO’s audit framework reinforces similar principles in more operational language: collect the minimum information needed, consider how age assurance could increase intrusive collection, avoid sole reliance on self-declaration where risk exists, and use “waterfall” techniques (combining approaches) and “age buffers” to route borderline users to stronger checks.

Even technically, the direction is clear. The National Institute of Standards and Technology notes that age estimation algorithms can offer a way to control access to age-restricted activities “without compromising privacy,” while also documenting that algorithm performance varies, can be influenced by demographics and image quality, and requires ongoing evaluation. By comparison, some document-based or manual verification methods can be time-consuming, with lengthy procedures that hurt user experience and efficiency.

This is where Agemin’s product positioning fits naturally: privacy-first age verification that is designed to minimize data collection while still enabling strong age assurance at scale.

Based on Agemin’s published materials:

- Agemin emphasizes fast verification flows that do not require ID uploads or credit cards, using a live selfie or email-based method as primary options.

- Its facial age estimation product is described as using a live selfie with liveness checks, with claims that it processes only the minimum data needed and does not transmit or store the user’s facial image, and that retention policies can be configured to align with regulatory obligations.

- Its developer documentation describes a two-step flow (frontend verification followed by backend validation), highlights server-side validation as a security requirement, and states that “no personal data is stored by default” with “biometric data deleted after verification,” alongside security claims such as ISO-related certification language.

- Agemin also positions “step-up” logic: starting with a lighter check and escalating only when higher assurance is needed, with controls over retention and regional rules.

The strategic takeaway for social platforms is simple but demanding: if age verification becomes a blanket ID-check requirement for every user, the UX and privacy costs become unacceptable. If it becomes a risk-based, privacy-by-design system, it can reduce harm and reduce friction, especially when paired with clear appeals, accessibility support, and transparent disclosures.

The business case is about resilience, not just compliance

If you frame age verification as “compliance overhead,” you will implement it too late and too narrowly.

In 2026, strong age assurance is better understood as part of platform resilience: protecting growth, protecting monetization, and reducing catastrophic safety incidents that can reshape the roadmap overnight.

Regulatory enforcement is no longer hypothetical. The UK has demonstrated a willingness to fine services over inadequate age checks, and EU regulators have moved from guidelines into investigative actions requesting details on age verification and age assurance systems from major services. In the EU, investigations into adult platforms under the Digital Services Act have explicitly focused on risks to minors linked to the absence of effective age verification measures—reinforcing that self-declared age confirmation is not treated as an adequate control in high-risk contexts.

There is also brand logic. Advertisers and partners increasingly want to know whether a platform can credibly prevent underage access to adult content, gambling-like features, or harmful commercial practices. The European Commission’s guidelines explicitly mention protecting minors from harmful commercial practices and addictive behaviors, not only from illegal content. So a platform that can demonstrate effective age assurance, appropriate defaults for minors, and strengthened recommender governance is not just “safer”—it may be materially more attractive to brand partners operating under their own risk constraints.

And there is a cost logic. Large-scale reporting systems for child exploitation are strained, and volumes remain enormous. When a platform can reduce the number of minors exposed to high-risk surfaces upfront, it reduces downstream caseload: fewer crisis escalations, fewer complex appeals, fewer high-stakes edge cases where moderators must make irreversible decisions under time pressure.

Implementation choices that matter for product and Trust and Safety teams

Age assurance fails most often when it is treated as a single product requirement—“add a check at sign-up”—instead of a system that evolves with features, threats, and regulations.

A robust implementation in 2026 typically combines timing strategy, risk-tiered flows, and measurement.

Timing strategy is not one-size-fits-all. The EU guidelines explicitly endorse a risk-based approach: platforms differ, so measures should be proportionate and grounded in children’s rights and privacy-by-design. In practice, many platforms choose one of three approaches (or a hybrid):

- Verify at sign-up for services that are clearly age-restricted or where underage access creates immediate safety risks.

- Verify at “sensitive feature access” (for example: public posting, DMs, live streaming, mature content feeds, creator monetization, or exposure to certain recommendation surfaces).

- Verify at “risk triggers” (for example: anomalous behavior, repeated reports, suspected age misrepresentation, or geographic policy changes).
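
The three timing approaches above could be combined into one decision function, sketched below. The feature names, trigger names, and the rule that a risk trigger prompts a re-check even for previously verified users are all assumptions made for illustration, not requirements drawn from any regulation.

```python
from dataclasses import dataclass
from enum import Enum


class Moment(Enum):
    SIGN_UP = "sign_up"
    FEATURE_ACCESS = "feature_access"
    RISK_TRIGGER = "risk_trigger"


# Hypothetical feature and trigger names, loosely following the examples above.
SENSITIVE_FEATURES = {"public_posting", "direct_messages", "live_streaming",
                      "mature_feed", "creator_monetization"}
RISK_TRIGGERS = {"anomalous_behavior", "repeated_reports",
                 "suspected_age_misrepresentation", "policy_change"}


@dataclass(frozen=True)
class Event:
    moment: Moment
    name: str = ""                 # feature or trigger name, if any
    already_verified: bool = False


def needs_age_check(event: Event, service_is_age_restricted: bool) -> bool:
    """Decide whether this event should prompt an age check."""
    if event.moment is Moment.RISK_TRIGGER:
        # Assumption: a known risk trigger prompts a re-check even for
        # users who were verified earlier.
        return event.name in RISK_TRIGGERS
    if event.already_verified:
        return False
    if event.moment is Moment.SIGN_UP:
        return service_is_age_restricted
    return event.name in SENSITIVE_FEATURES
```

For example, `needs_age_check(Event(Moment.FEATURE_ACCESS, "live_streaming"), False)` asks for a check on a general-audience service, while the same call with `already_verified=True` does not.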

Risk-tiered flows are where privacy and UX are won or lost. The ICO explicitly recommends “waterfall” techniques (combining methods) and using an “age buffer” so borderline users complete an additional check. The EDPB similarly emphasizes least-intrusive measures that are still effective and warns against unnecessary tracking or profiling. Parental controls can also serve as an additional layer of protection alongside age verification, helping to restrict access to inappropriate content and manage online activity.

In concrete terms, that often means:

- A low-friction age estimation step for the majority of users (with an age buffer around the threshold).

- Step-up verification for borderline outcomes or high-risk features.

- A clear, accessible appeal path and human intervention when automated decisions risk excluding legitimate users or disproportionately affecting particular groups.
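
The flow above can be sketched as a small routing function. This is an illustrative simplification: `route_estimate`, its parameters, and the choice to deny clearly-below-threshold estimates (with an appeal path) are assumptions, and a real buffer width should come from the measured error rates of the estimator in use.

```python
from enum import Enum


class Outcome(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"   # route to a stronger check
    DENY = "deny"         # deny, with an appeal path offered


def route_estimate(estimated_age: float, threshold: int, buffer_years: float,
                   high_risk_feature: bool = False) -> Outcome:
    """Route an age-estimation result through a simple waterfall."""
    if high_risk_feature:
        # Assumption: high-risk features always require the stronger check.
        return Outcome.STEP_UP
    if estimated_age >= threshold + buffer_years:
        return Outcome.ALLOW
    if estimated_age < threshold - buffer_years:
        return Outcome.DENY
    # Inside the buffer: the estimate is too close to the threshold to rely on.
    return Outcome.STEP_UP
```

With a threshold of 18 and a three-year buffer, an estimate of 25 is allowed, an estimate of 19.5 is stepped up, and an estimate of 13 is denied pending appeal.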

Fairness and accessibility cannot be bolted on later. NIST has documented that age estimation accuracy can differ based on image quality, gender, region of birth, and age, and that error rates can be higher for certain groups—meaning threshold design and escalation logic matter. The ICO’s children’s code guidance also frames age assurance through children’s rights, explicitly calling out non-discrimination risks and the need to mitigate bias and enable correction of inaccurate assessments.

This is also where Agemin’s integration posture is relevant for product and engineering leaders. Agemin’s documentation describes a developer flow built around a user-facing SDK step and a backend validation step, emphasizing server-side verification as the security control that prevents tampering. Its social media solution positioning also emphasizes adapting flows to local rules by detecting user location and supporting step-up “authentication when needed” for sensitive actions.
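
To show why the backend step matters, here is a generic sketch of server-side validation using an HMAC-signed result. It is not Agemin's API: the token format, the payload fields (`session_id`, `over_threshold`, `expires_at`), and both function names are hypothetical. A real integration would follow the provider's documented validation method instead.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def sign_result(payload: dict, secret: bytes) -> str:
    """Stand-in for the verification provider: serialize and sign a result."""
    body = base64.urlsafe_b64encode(json.dumps(payload, sort_keys=True).encode())
    sig = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return f"{body.decode()}.{sig}"


def validate_result(token: str, secret: bytes, session_id: str,
                    now: Optional[float] = None) -> bool:
    """Backend check: accept only an untampered, unexpired result for this session."""
    try:
        body, sig = token.rsplit(".", 1)
        expected = hmac.new(secret, body.encode(), hashlib.sha256).hexdigest()
        # Constant-time comparison avoids leaking how much of the signature matched.
        if not hmac.compare_digest(sig, expected):
            return False
        payload = json.loads(base64.urlsafe_b64decode(body.encode()))
    except (ValueError, TypeError):
        # Malformed token, bad base64, or bad JSON: treat as invalid.
        return False
    current = time.time() if now is None else now
    return (payload.get("session_id") == session_id
            and payload.get("over_threshold") is True
            and payload.get("expires_at", 0) > current)
```

Because the browser never holds the secret, a user who edits the frontend response cannot produce a token that passes `validate_result`. Binding the result to a session and an expiry also limits replay.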

Continuous compliance is not optional anymore. Some regimes require recurring assessments or set expectations of ongoing review. Ofcom’s age-check guidance references recurring children’s access assessments, and the EU’s approach ties child protection to ongoing risk-based measures (including recommender governance). Australia’s framework similarly emphasizes “reasonable steps,” regulatory guidance, and penalties, meaning platforms should be prepared to demonstrate what they did, why it was proportionate, and how it is kept current.

Conclusion: age verification is becoming the new baseline of responsible social media

In 2026, protecting minors online is no longer a peripheral concern. It is increasingly treated as a defining expectation of credible, sustainable digital platforms—by regulators, by users, and by business partners.

That does not mean every platform must adopt the same method everywhere, for every user. The stronger pattern—across EU guidance, UK online safety enforcement, and privacy regulators’ statements—is risk-based age assurance: do what is proportionate, make it effective, and make it privacy-preserving.

Basic age gates and self-declaration can no longer carry that weight. Regulators have said so, enforcement actions reflect it, and the threat landscape—exacerbated by scale and synthetic media—has made the limitations obvious.

Agemin fits this moment with a product story built around AI-powered, privacy-first age verification that emphasizes minimal friction for legitimate users, risk-tiered step-up where necessary, and integration patterns designed for real-world product teams shipping across mobile and web.
