Mandatory Age Verification for Adult Websites: Meeting New Global Regulatory Demands

Adult content compliance is undergoing a structural reset. Across multiple jurisdictions, regulators are moving from “best-effort” nudges and self-declared age gates to mandatory, technologically enforced age assurance, with explicit expectations around effectiveness, auditability, and privacy. Businesses across digital content, social media, and technology are directly affected.

What’s changing isn’t just the volume of regulation; it’s the assignment of responsibility. Platform operators are increasingly treated as the control point: they are expected to prevent minors from accessing pornography or other adult material, not merely warn them away. Some recent legislation, such as California’s Digital Age Assurance Act, pushes part of that responsibility further up the stack, to operating system providers and app developers.

And the simplest “Are you over 18?” checkbox? In many regimes, it is now explicitly framed as inadequate.

Age verification technology is often criticized for being inaccurate and privacy-invasive, raising concerns for both users and the businesses required to implement these systems.

The global shift toward mandatory age verification

Two pressures are converging: an intensified political demand to protect minors online, and a growing regulatory conviction that voluntary, low-friction age gates do not work in practice. Both are framed around safeguarding young people, with online safety and mental health cited alongside access to harmful content. That combination is visible in the UK’s Online Safety Act implementation (with “highly effective” age assurance positioned as a cornerstone), in French enforcement activity specifically targeting pornography access, and in EU-level enforcement and guidance under the Digital Services Act.

In France, for example, the media regulator Arcom has cited audience measurement indicating that a large share of children access pornographic sites monthly, and it uses that evidence base to justify stronger verification and active oversight.

In the UK, Ofcom has been blunt about the direction of travel: age assurance must be “highly effective,” and services within scope are expected to implement robust checks by fixed milestones—rather than relying on self-declaration.

Even where the regulatory trigger is not “pornography” alone, the same concept is spreading: regulators are designing regimes where high-risk services must actually verify age (or block access), and gatekeepers such as platforms, distribution channels, and sometimes even app stores become points of enforcement leverage. Age verification is increasingly how services keep minors away from inappropriate content and demonstrate compliance with child-protection rules such as the EU’s DSA and the U.S. COPPA.

New rules reshaping adult content access

The compliance landscape is now best understood as a patchwork with momentum: different legal instruments, different enforcement models, but a consistent destination—mandatory age verification with real penalties.

United States state-level mandates and the post–Supreme Court inflection point

In the U.S., age verification for adult sites has largely advanced via state statutes. Several states’ laws share a recognizable pattern: if a website contains a defined “substantial portion” of material harmful to minors, the operator must perform “reasonable” age (and often “age and identity”) verification, with civil liability exposure for failure. ID-based checks, including passports and other government-issued documents, are increasingly being considered or required as a way to satisfy these statutes, which raises the privacy and data-security stakes of how those documents are handled.

A pivotal legal development arrived on June 27, 2025, when the Supreme Court of the United States upheld a Texas age-verification law for pornographic sites in Free Speech Coalition, Inc. v. Paxton. The decision materially reduced the “will this survive constitutional scrutiny?” uncertainty that had previously held back enforcement.

Concrete examples illustrate how these laws are drafted and enforced:

- Virginia codifies a civil-liability model requiring age and identity verification for sites above a “more than 33 and one-third percent” threshold, and creates exposure for damages and attorney fees if a minor gains access.

- Louisiana became, in 2022, the first state to enact an age verification requirement for adult websites. It requires reasonable age verification and explicitly restricts retention of identifying information after access is granted (a notable privacy-design signal embedded directly in statute language).

- Arkansas enacted SB66 (Act 612) targeting online distribution of harmful material and requiring “reasonable age verification.”

- Utah SB0287 creates obligations and liabilities tied to distribution of pornography or material harmful to minors.

- Tennessee SB1792 (“Protect Tennessee Minors Act”) is another example of legislating age verification obligations (with litigation and injunction dynamics varying by state and time).

- Florida legislative analysis documents a “reasonable age verification” approach, and it explicitly discusses deletion expectations and third‑party separation in verification flows.
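The percentage thresholds are simple arithmetic, but the boundary matters. A minimal sketch of a Virginia-style test, assuming content has already been counted into hypothetical `harmful_items` and `total_items` figures (how a statute actually measures a “portion” is a legal question this code does not answer):

```python
from fractions import Fraction

# Threshold modelled on Virginia's "more than 33 and one-third percent" wording.
ONE_THIRD = Fraction(1, 3)


def exceeds_threshold(harmful_items: int, total_items: int,
                      threshold: Fraction = ONE_THIRD) -> bool:
    """Return True only if the harmful share is strictly greater than the threshold.

    Exact fractions avoid rounding: with a rounded constant such as 0.3333,
    a share of exactly one third would wrongly count as exceeding it.
    """
    if total_items <= 0:
        raise ValueError("total_items must be positive")
    return Fraction(harmful_items, total_items) > threshold


print(exceeds_threshold(100, 300))  # exactly one third: False
print(exceeds_threshold(101, 300))  # just over one third: True
```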

The short version: state-by-state variability remains, but the legal risk of “doing nothing” has increased, and the Supreme Court’s 2025 ruling accelerated that trajectory. (For contrast, the UK’s Digital Economy Act 2017 was an early attempt at mandatory age verification for websites publishing pornography on a commercial basis, but its age verification provisions were never brought into force.)

United Kingdom Online Safety Act and “highly effective” age assurance

The UK regime is unusually explicit about standards and timelines. Ofcom’s guidance frames robust age checks as a cornerstone of the Online Safety Act, and it differentiates between age assurance methods that can be “highly effective” versus those that are not.

Key milestones are clearly signposted in Ofcom materials, including expectations that pornography services implement age checks by July 2025 at the latest, with phased duties across different service types.

The enforcement teeth are also not theoretical. Ofcom describes enforcement actions and maximum penalty levels (up to 10% of qualifying worldwide revenue or £18 million, whichever is greater).
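The “whichever is greater” wording is easy to misread as a cap of £18 million. A minimal worked sketch using only the two figures quoted above; it computes the statutory maximum, not a likely fine, and the function name is our own:

```python
from decimal import Decimal

# Figures as stated in Ofcom's materials.
FIXED_AMOUNT_GBP = Decimal("18000000")
REVENUE_SHARE = Decimal("0.10")


def uk_osa_max_penalty(qualifying_worldwide_revenue_gbp: Decimal) -> Decimal:
    """Upper bound on an Online Safety Act fine: whichever figure is greater."""
    return max(FIXED_AMOUNT_GBP, REVENUE_SHARE * qualifying_worldwide_revenue_gbp)


print(uk_osa_max_penalty(Decimal("50000000")))   # 10% is £5m, so the £18m figure applies
print(uk_osa_max_penalty(Decimal("500000000")))  # 10% is £50m, which exceeds £18m
```

For smaller services the fixed figure dominates; the revenue-based figure only takes over once qualifying worldwide revenue passes £180 million.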

European Union Digital Services Act and escalating enforcement

At EU level, the Digital Services Act (DSA) establishes a child protection obligation for online platforms accessible to minors, reinforced by Commission guidelines that embed “safety and privacy by design” and a risk-based approach.

Importantly, the European Commission has demonstrated enforcement intent in the adult-content context. In May 2025 it opened formal proceedings against major pornographic platforms for suspected DSA breaches tied to protecting minors—explicitly focusing on whether platforms relied on ineffective age gating.

For large platforms, the Commission also communicates high-end penalty exposure: fines of up to 6% of global annual turnover, along with corrective measures.

France, Germany, Spain, and selected global signals

France is now one of the clearest examples of active, operational enforcement. Arcom issues notices, monitors compliance, and can pursue blocking or delisting outcomes for non-compliant pornography services—pushing age verification from “policy” into day-to-day platform operations.

In Germany, youth protection has long relied on “closed user group” approaches and detailed evaluation criteria for age verification systems (AVS). The Commission for the Protection of Minors in the Media (KJM) publishes criteria for evaluating AVS concepts, and European monitoring reports document KJM’s use of blocking and compliance expectations (including closed user groups for adult material).

In Spain, government-backed mechanisms to verify age for pornography access have been publicly signaled through digital wallet approaches, reflecting a broader European trend toward standardized, privacy-respecting proofs of age.

In South Korea, age assurance has also appeared in national policy approaches, demonstrating that “age gating” debates and enforcement are not limited to Western jurisdictions—even if the legal structures differ.

California’s Digital Age Assurance Act requires operating system providers to ascertain the age of users setting up the OS, making certain content and services subject to age verification requirements.

Finally, beyond Europe and the U.S., regulators are increasingly willing to impose age controls as a condition of access for “high-risk” online services. Australia’s regulator, for instance, has enforced social media minimum age duties and is extending age-based restrictions into other categories through its online safety toolkit.

What regulators expect from age verification systems

Despite jurisdictional differences, regulators are converging on a shared concept: age verification must be effective in practice, not merely present in UI.

Reliability standards and anti-circumvention expectations

Ofcom provides one of the clearest “inspection frameworks” for age assurance. It states that highly effective age assurance should meet four criteria—technically accurate, robust, reliable, and fair—and it explicitly lists self-declaration of age as not capable of meeting the standard.

That same guidance emphasizes real-world robustness: services should identify and mitigate circumvention methods that are easily accessible to children and reasonably expected to be used.

The practical implication is uncomfortable but unavoidable: if your system is trivially bypassed (for example, through weak gating placement or predictable loopholes), you are likely out of step with regulator expectations—even if you technically “have an age gate.”
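One way to make that screening explicit in a compliance codebase is a method registry. A minimal sketch, assuming our own enum labels (not Ofcom identifiers) for the methods Ofcom’s guidance lists as capable of being highly effective and those it lists as not capable. Passing the screen is necessary but not sufficient, since the four criteria still apply to the method as actually deployed:

```python
from enum import Enum


class Method(Enum):
    # Listed in Ofcom's guidance as capable of being highly effective
    OPEN_BANKING = "open_banking"
    PHOTO_ID_MATCHING = "photo_id_matching"
    FACIAL_AGE_ESTIMATION = "facial_age_estimation"
    MOBILE_NETWORK_OPERATOR = "mobile_network_operator"
    CREDIT_CARD = "credit_card"
    DIGITAL_IDENTITY_SERVICE = "digital_identity_service"
    EMAIL_BASED_ESTIMATION = "email_based_estimation"
    # Listed as not capable of meeting the standard
    SELF_DECLARATION = "self_declaration"
    PAYMENT_WITHOUT_18_REQUIREMENT = "payment_without_18_requirement"
    CONTRACTUAL_TERMS_ONLY = "contractual_terms_only"


NOT_CAPABLE = frozenset({
    Method.SELF_DECLARATION,
    Method.PAYMENT_WITHOUT_18_REQUIREMENT,
    Method.CONTRACTUAL_TERMS_ONLY,
})


def is_candidate_method(method: Method) -> bool:
    """Screen out methods that can never qualify.

    True means only "worth evaluating": the deployed method must still be
    shown to be technically accurate, robust, reliable and fair.
    """
    return method not in NOT_CAPABLE
```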

“Front gate” design: checking before content is visible

One design detail now carries regulatory weight: where the check happens.

The most basic form of age verification is to require a person to input their date of birth on a form, but this method is easily bypassed.

Ofcom’s position is that, for dedicated pornography services, highly effective age assurance should be implemented at the point of entry—or the provider must ensure no harmful content is visible before the age check completes. In other words: no preview thumbnails, no explicit feed, no “blurred but recognizable” content before verification.

This is a sharp departure from legacy patterns where content is visible and the site “asks nicely” after exposure is already possible. Regulators are increasingly unwilling to accept that sequencing.
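In server terms, “front gate” means deny by default. A minimal sketch, assuming a hypothetical `Session` object, route prefixes, and `load_content` callback: every route, including image and API endpoints, returns the gate page until a completed check sets the session flag, and content is not even loaded before that.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical routes needed to complete the check itself; everything else is gated.
UNGATED_PREFIXES = ("/age-check/", "/legal/", "/static/gate/")
GATE_PAGE = "age-check page (no thumbnails, previews or explicit titles)"


@dataclass
class Session:
    # Set only by a completed age check, never by a self-declared checkbox.
    age_verified: bool = False


def handle_request(path: str, session: Session,
                   load_content: Callable[[str], str]) -> str:
    """Deny by default: every route, including image and API routes, is gated."""
    if session.age_verified or path.startswith(UNGATED_PREFIXES):
        return load_content(path)
    # load_content is never called here, so nothing explicit is fetched or
    # rendered for an unverified session (no previews, feeds or blurred thumbnails).
    return GATE_PAGE
```

An allowlist is the safer design choice: a blocklist of “adult routes” fails open the moment someone adds a new thumbnail or feed endpoint.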

Privacy and data minimization are no longer optional

Critically, regulators are tightening age verification while simultaneously warning against turning adult-content browsing into identity surveillance.

France’s data protection authority, CNIL, frames online age verification as inherently privacy-sensitive because confirmed identity can be linked to online activity, which—when the content is adult—becomes especially sensitive. CNIL therefore pushes toward models that minimize what the adult site itself learns about the user.

CNIL also explicitly rejects some common “shortcuts,” including simple declarations of being over 18, and recommends trusted third-party involvement to avoid direct transmission of identifying data to the pornographic site.

At EU level, the European Data Protection Board situates “age assurance” inside the broader European child protection framework and signals that age assurance must be aligned with data protection requirements and rights-based design—rather than treated as a compliance hack.

Auditability and demonstrability

A modern compliance posture requires more than “we do age checks.” Regulators increasingly expect you to demonstrate:

- what method is used,

- how it performs (including bias/fairness considerations),

- how it resists circumvention,

- how it is monitored and improved,

- and how privacy risks are mitigated.

Ofcom’s technical guidance, for example, describes ongoing monitoring, KPI-based reliability checks for AI/ML methods, and periodic reassessment as technologies evolve.
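A sketch of what “demonstrable” can look like at the data level, assuming a hypothetical event schema: each decision records the method, its version, and the outcome, but no identity data, and outcome rates per method become KPIs that can be tracked between reviews.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, Iterable


@dataclass(frozen=True)
class AgeCheckEvent:
    """One auditable age-check decision. Deliberately holds no identity data."""
    method: str           # hypothetical label, e.g. "facial_age_estimation"
    outcome: str          # "pass", "fail" or "step_up"
    method_version: str   # model or vendor version, needed for reassessment
    at: datetime


def outcome_rates(events: Iterable[AgeCheckEvent], method: str) -> Dict[str, float]:
    """Share of each outcome for one method: a simple KPI to track over time."""
    counts = Counter(e.outcome for e in events if e.method == method)
    total = sum(counts.values())
    return {outcome: n / total for outcome, n in counts.items()} if total else {}


now = datetime.now(timezone.utc)
log = [AgeCheckEvent("facial_age_estimation", "pass", "v1", now)] * 3
log.append(AgeCheckEvent("facial_age_estimation", "step_up", "v1", now))
print(outcome_rates(log, "facial_age_estimation"))  # {'pass': 0.75, 'step_up': 0.25}
```

A jump in step-up rates after a model version change is the kind of signal periodic reassessment is meant to catch.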

The compliance risks of doing nothing

“Wait and see” is quickly becoming the high-risk strategy—financially, operationally, and reputationally.

Regulatory fines and enforcement escalation

In the UK, Ofcom states that failure to comply can lead to enforcement actions and, in the most serious cases, fines up to £18 million or 10% of qualifying worldwide revenue (whichever is greater).

In the EU, the Commission communicates that large platforms may face fines of up to 6% of global annual turnover for DSA non-compliance—and it has already opened investigations into pornographic platforms specifically over suspected failures to protect minors.

In France, Arcom has demonstrated active enforcement behavior: formal notices can be followed by blocking or delisting measures for non-compliance.

Blocking, geo-restrictions, and forced market exits

Service restriction orders, ISP blocking, and de-indexing are no longer speculative tools. They are being operationalized in multiple regimes, sometimes as a last step and sometimes as the default enforcement lever when a service won’t comply. Blocking is a blunt way of enforcing age restrictions: it keeps minors out of a non-compliant site by cutting off access for everyone in that jurisdiction.

In practice, that can translate to abrupt revenue loss in a jurisdiction. And the enforcement target is expanding: in France, Arcom has described shifting from the largest sites to smaller-audience sites, signaling a broadening enforcement perimeter, not a narrowing one.

Civil liability and legal exposure

Several U.S. state laws incorporate private enforcement through civil liability. Virginia’s statute, for example, creates civil liability for damages arising from a minor’s access and provides for attorney fees and costs. Louisiana’s statute similarly creates liability and includes explicit rules about not retaining identifying information post-verification.

This matters because “enforcement” may not only come from regulators. It can come from litigation—especially in jurisdictions that are deliberately designed to be privately enforceable.

App store distribution and account-level age gating

Even if you are not an “adult website” in the classic sense, distributing mature content via apps exposes you to an additional layer of gating.

Apple announced that starting February 24, 2026, it will block users in certain countries from downloading apps rated 18+ unless they have been confirmed to be adults through “reasonable methods,” with the App Store performing confirmation automatically (while warning developers they may still have separate obligations to confirm adulthood).

Google similarly describes account-level age verification requirements for users creating new app store accounts in applicable U.S. states, driven by state regulations, and provides APIs intended to help developers meet obligations.

To access age-restricted content or services, users may be asked to confirm their age with a valid government ID, a mobile phone number, a selfie, or a credit card. Credit card checks are common but have known weaknesses, such as minors using an adult’s card or fraudulent use of card details.

For compliance leaders, this is a key trend: gatekeepers are being pulled into age assurance—meaning non-compliance can cascade into distribution friction and growth constraints.

Payment ecosystem pressure

For adult platforms operating with card payments, the payment ecosystem introduces another compliance axis.

Card payments also double as an age signal: credit card verification is widely used to gate access to adult entertainment websites.

Mastercard publicly reiterates that any adult content website supported by institutions on its network must adhere to standards requiring controls to monitor, block, and remove unlawful content—and that these standards demand confirmation of age and consent from anyone in content published on such sites.

Mastercard’s published rule documentation also reflects requirements for adult merchants to verify identity and age of persons depicted and provide supporting documents upon request.

The operational reality is straightforward: if your compliance posture cannot satisfy both regulators and payment network expectations, your ability to monetize is at risk—sometimes faster than regulators can even act.

The technical challenge: compliance, privacy, and UX in the same funnel

Age verification is not just a legal box to tick. It is now a core part of product architecture—and it creates a real three-way tension.

Privacy risk is intrinsic, not accidental

CNIL articulates the central privacy challenge plainly: proving age often implies knowing identity, and identity can be linked to browsing activity, which becomes particularly sensitive in the adult-content context.

This is why privacy-by-design assumptions matter. Systems that directly collect identity documents on an adult site, or that retain verification artifacts longer than necessary, magnify breach impact and heighten regulatory scrutiny.

Circumvention is easy unless you design against it

Many age verification models are bypassable by design. CNIL explicitly notes that VPN use can allow minors to bypass country-based age verification or site blocking, and it highlights the difficulty of ensuring that the person using a proof is the person who obtained it. Minors may also use fraudulent means, such as an adult’s documents or payment card, which is why anti-circumvention measures have to be designed in rather than added later.

That observation aligns with UK expectations: Ofcom’s guidance pushes services to mitigate circumvention methods that are likely to be used by children.
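CNIL’s point about a proof being used by someone other than the person who obtained it can be partly monitored without identifying anyone. A minimal sketch, assuming opaque proof and device identifiers and hypothetical thresholds: flag a proof presented from more than a set number of distinct devices within a sliding window. It does nothing about VPNs, and a flag should trigger a step-up check rather than an automatic ban.

```python
from collections import defaultdict, deque


class ProofReuseMonitor:
    """Flag an age proof presented from too many distinct devices in a window.

    Thresholds are hypothetical. Proof and device IDs are opaque strings, so
    the monitor can detect sharing without learning who anyone is.
    Timestamps passed to observe() are assumed to be non-decreasing.
    """

    def __init__(self, max_devices: int = 3, window_seconds: float = 3600.0):
        self.max_devices = max_devices
        self.window = window_seconds
        self._seen = defaultdict(deque)  # proof_id -> deque of (timestamp, device_id)

    def observe(self, proof_id: str, device_id: str, now: float) -> bool:
        """Record one presentation; return True if the proof looks shared."""
        events = self._seen[proof_id]
        events.append((now, device_id))
        # Drop presentations that have fallen out of the sliding window.
        while events and now - events[0][0] > self.window:
            events.popleft()
        distinct_devices = {device for _, device in events}
        return len(distinct_devices) > self.max_devices
```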

Fairness and bias are not side issues anymore

Where biometric or AI-based methods are used, fairness is now part of the compliance definition, not a “nice-to-have.”

Ofcom lists “fairness” as one of four criteria for highly effective age assurance and discusses the need for testing against diverse populations.

Measurement work (including by the UK’s privacy regulator) highlights that biometric age estimation systems can perform unevenly across demographic groups, for example by skin tone, which is why performance evaluation, monitoring, and fallback paths are essential.
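Fairness testing starts with breaking error down by group rather than reporting one overall figure. A minimal sketch, assuming a hypothetical labelled evaluation set of (group, true age, estimated age) tuples: compute mean absolute error (MAE) per group and the largest gap between groups. The errors that matter most sit near the legal age boundary, so the same calculation is worth running on that slice alone.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, float, float]  # (group, true_age, estimated_age), hypothetical format


def mae_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Mean absolute error of age estimates for each demographic group."""
    errors = defaultdict(list)
    for group, true_age, estimated_age in records:
        errors[group].append(abs(estimated_age - true_age))
    return {group: sum(errs) / len(errs) for group, errs in errors.items()}


def max_mae_gap(records: Iterable[Record]) -> float:
    """Largest MAE difference between any two groups: one simple fairness KPI."""
    per_group = mae_by_group(records)
    return max(per_group.values()) - min(per_group.values())
```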

You still have to ship a product people will use

Adults will notice changes. Ofcom itself acknowledges that, as services introduce age assurance, adult users will experience a different access flow.

This is the conversion dilemma: stronger checks can cause drop-off, yet weaker checks can fail legal standards. The only sustainable path is to design verification as a tiered, risk-based interaction—lightweight when possible, more stringent only when necessary, and demonstrably privacy-aware.

Modern age verification technologies and privacy-first patterns

The newest generation of age verification is less about “one method” and more about composable assurance: combining fast checks, anti-spoofing defenses, cryptographic proofs, and step-up workflows.

Facial age estimation with liveness and challenge thresholds

Ofcom explicitly recognizes facial age estimation as a method capable of being highly effective (when implemented properly and evaluated against the criteria). It also recommends a “challenge age” approach when age estimation is used—i.e., applying a buffer that forces step-up verification for users near the age boundary.
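The challenge-age logic reduces to a threshold with a buffer. A minimal sketch, assuming a hypothetical 7-year buffer (giving a challenge age of 25); in a real deployment the buffer would be set from the method’s measured error around the boundary. Nothing below the challenge age is rejected outright: adults who are estimated as younger need a stronger alternative route, not a dead end.

```python
def challenge_decision(estimated_age: float, legal_age: int = 18,
                       buffer_years: float = 7.0) -> str:
    """Map a facial age estimate to an action using a challenge-age buffer.

    The 7-year buffer (challenge age 25) is a hypothetical value; in practice
    it would be derived from the method's measured error near the boundary.
    """
    challenge_age = legal_age + buffer_years
    if estimated_age >= challenge_age:
        return "pass"
    # Below the challenge age, including estimates between 18 and 25, the user
    # is routed to a stronger method rather than refused outright.
    return "step_up"
```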

Facial age estimation uses machine learning to estimate a user’s age from the facial features in a selfie. Because a printed photo, replayed video, or mask can be presented in place of a live face, liveness detection and presentation attack detection (PAD) are core components of spoof resistance in biometric flows. ISO/IEC 30107-3 establishes principles and methods for assessing PAD mechanisms, reflecting how “anti-spoofing” can be treated as testable, reportable performance rather than marketing language.

Standards and evaluation work from the National Institute of Standards and Technology (NIST) similarly highlights that system performance and demographic differentials can arise not only from core algorithms but also from components such as presentation attack detection (liveness and spoofing defenses).
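ISO/IEC 30107-3 expresses PAD performance chiefly through two error rates: APCER, the proportion of attack presentations of a given attack type (presentation attack instrument species) wrongly accepted as genuine, and BPCER, the proportion of genuine presentations wrongly rejected as attacks. A minimal sketch, assuming a hypothetical labelled test set; a conformant evaluation also specifies test protocols, attack types, and sample sizes that this does not cover:

```python
from collections import defaultdict


def pad_error_rates(results):
    """Return (APCER per attack species, BPCER) from labelled PAD decisions.

    `results` is an iterable of (species, predicted_attack) pairs: species is
    None for a bona fide (genuine) presentation, or a hypothetical label such
    as "printed_photo" for an attack; predicted_attack is the PAD decision.
    """
    attacks = defaultdict(lambda: [0, 0])  # species -> [accepted as genuine, total]
    bona_fide_rejected = 0
    bona_fide_total = 0
    for species, predicted_attack in results:
        if species is None:
            bona_fide_total += 1
            bona_fide_rejected += int(predicted_attack)
        else:
            attacks[species][0] += int(not predicted_attack)
            attacks[species][1] += 1
    apcer = {s: missed / total for s, (missed, total) in attacks.items()}
    bpcer = bona_fide_rejected / bona_fide_total if bona_fide_total else float("nan")
    return apcer, bpcer
```

Reporting the worst-performing attack type (`max(apcer.values())`) rather than an average is the more conservative reading, and splitting BPCER by demographic group is one way to surface the differentials mentioned above.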

Anonymous age tokens and selective disclosure credentials

A major privacy-first pattern is to verify an attribute, not a full identity.

The European Commission’s blueprint describes an approach where proof of age can be issued from trusted sources, and—crucially—the link between user and proof provider is cut after issuance, with the site receiving an anonymous proof that does not reveal identifying information.
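The data-minimization half of that design can be sketched with the standard library. A minimal sketch, assuming a hypothetical token format: the verifier issues a short-lived proof that carries only `age_over_18`, an expiry, and a random nonce, and the site checks integrity and expiry without ever seeing a name or birthdate. HMAC with a shared key is a stand-in chosen to keep the example dependency-free; a real scheme would use public-key signatures so the site can verify proofs but not mint them, and full unlinkability as described in the Commission’s blueprint needs more than this sketch provides.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time

# Stand-in signing key. A real deployment would use public-key signatures.
VERIFIER_KEY = secrets.token_bytes(32)


def issue_proof(key: bytes, ttl_seconds: int = 600) -> str:
    """Verifier side: after checking age, issue a proof carrying only the attribute."""
    claims = {"age_over_18": True, "exp": int(time.time()) + ttl_seconds,
              "nonce": secrets.token_hex(8)}  # no name, birthdate or user ID
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + tag


def accept_proof(key: bytes, token: str) -> bool:
    """Site side: check integrity, expiry and the single attribute it needs."""
    try:
        payload, tag = token.rsplit(".", 1)
    except ValueError:
        return False  # malformed token
    expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, tag):
        return False  # forged or tampered
    claims = json.loads(base64.urlsafe_b64decode(payload))
    return claims.get("age_over_18") is True and claims.get("exp", 0) > time.time()
```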

The EU Digital Identity Wallet’s age verification use case similarly emphasizes selective disclosure: proving you are above a threshold age without revealing full birthdate or other identifying information.
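Selective disclosure works because the issuer can derive threshold attributes from data it already holds. A minimal sketch, assuming illustrative thresholds and a hypothetical `age_over_NN` naming: compute completed years of age correctly (a birthday later in the year counts as not yet reached) and expose one boolean per threshold, so a relying party that asks “over 18?” learns nothing else.

```python
from datetime import date
from typing import Dict, Iterable


def age_on(birth_date: date, today: date) -> int:
    """Completed years of age on `today`."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def age_over_claims(birth_date: date, today: date,
                    thresholds: Iterable[int] = (16, 18, 21)) -> Dict[str, bool]:
    """Derive one boolean per threshold; the birthdate itself is never disclosed."""
    age = age_on(birth_date, today)
    return {f"age_over_{t}": age >= t for t in thresholds}


print(age_over_claims(date(2007, 6, 15), today=date(2025, 6, 14)))
# {'age_over_16': True, 'age_over_18': False, 'age_over_21': False}
```

One edge case to decide explicitly: in this sketch a 29 February birthday is treated as reached on 1 March in non-leap years, and jurisdictions do not all agree on that convention.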

At the standards layer, the World Wide Web Consortium Verifiable Credentials model is explicitly designed for cryptographically verifiable, privacy-respecting credential exchange—supporting selective disclosure and minimizing unnecessary data exposure.

Trusted third-party separation and “don’t let the adult site collect IDs”

CNIL strongly favors models where the pornographic site does not directly collect identity documentation, and it recommends compartmentalization—an issuer that knows identity but not which site is visited, and a relying party (the adult site) that receives only proof of eligibility.

This “separation of powers” is quickly becoming the emerging standard for privacy-first age verification: prevent minors, protect adults’ privacy, and shrink breach fallout.
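On the relying-party side, the separation can be enforced mechanically rather than by policy alone. A minimal sketch, assuming a hypothetical allowlist of proof fields: the adult site rejects any proof payload that carries more than eligibility data, which surfaces a misconfigured issuer instead of quietly ingesting identity attributes.

```python
# Hypothetical allowlist: the only fields an adult site should accept from a proof.
ALLOWED_PROOF_FIELDS = frozenset({"age_over_18", "exp", "nonce"})


def check_minimal(claims: dict) -> dict:
    """Accept a proof payload only if it carries eligibility data and nothing else.

    Raising on extra fields (instead of silently ignoring them) surfaces an
    issuer that is over-sharing, so the problem gets fixed at the source.
    """
    extra = sorted(set(claims) - ALLOWED_PROOF_FIELDS)
    if extra:
        raise ValueError(f"proof carries fields beyond eligibility: {extra}")
    if claims.get("age_over_18") is not True:
        raise ValueError("proof does not assert age_over_18")
    return claims
```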

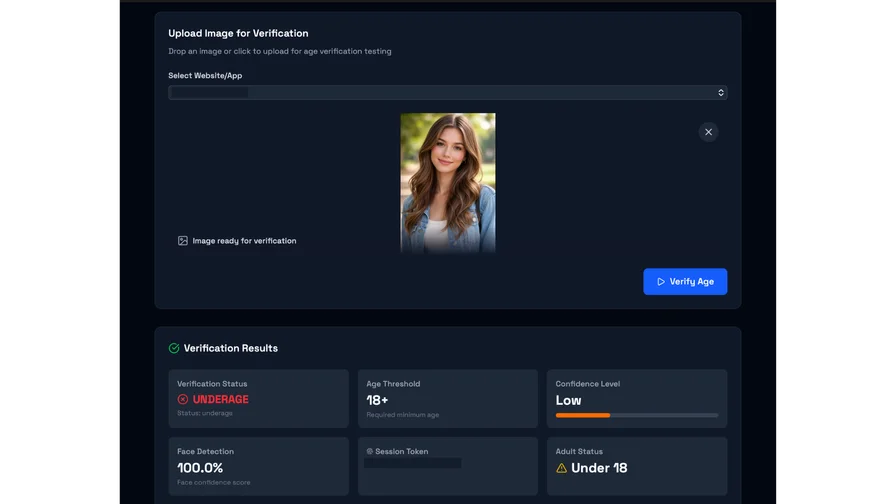

Where Agemin fits in a privacy-first stack

If you choose to operationalize privacy-first age verification using an external identity verification and age assurance provider, Agemin positions itself around fast, privacy-first verification via live selfie age estimation or email-based estimation, explicitly emphasizing “no ID uploads” and reduced friction.

From an implementation standpoint, Agemin also publishes developer documentation describing rapid setup, claimed model accuracy metrics (including MAE), and security posture claims (including ISO 27000 certification as stated in its docs).

For adult content use cases specifically, Agemin describes a tiered approach—start with the “lightest acceptable check,” step up only when needed, and orchestrate workflows via API/SDKs and webhooks.

And if your broader risk program includes KYB/KYC/AML-linked workflows, Agemin’s own materials describe integration with those compliance systems as part of an API-centered monitoring and auditing posture.

Implementing age verification at scale and preparing for what’s next

At high volume, the winners will not be the platforms with the “strictest-looking” prompt. They will be the platforms with verification that is defensible, measurable, privacy-minimizing—and operationally calm.

A practical implementation blueprint for Trust & Safety and Compliance

Start by mapping jurisdictions and service scope, then design toward the strictest common requirements you realistically face (often: “front gate” age assurance for pornography, plus demonstrable privacy protections). Ofcom’s materials are useful here because they specify criteria and explicitly reject weak methods like self-declaration.

Next, treat age verification as a policy engine, not a single flow:

- Risk-tier the user journey (e.g., casual browsing vs. explicit video playback vs. uploads vs. monetization).

- Apply challenge thresholds and step-up verification for edge cases.

- Build circumvention monitoring (especially VPN/geolocation anomalies) without turning the system into pervasive surveillance.
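The tiered journey can be expressed as a small lookup from journey step to required assurance level. A minimal sketch, assuming hypothetical step names and a three-level scale; which methods count as “estimated” or “verified” for each step is a policy decision, not something this code settles. Unknown steps default to the strictest level, so new features fail closed.

```python
from enum import IntEnum


class Assurance(IntEnum):
    NONE = 0
    ESTIMATED = 1  # e.g. facial or email-based estimation above a challenge age
    VERIFIED = 2   # e.g. a document- or credential-based check


# Hypothetical mapping from journey step to the assurance level it requires.
REQUIRED = {
    "landing": Assurance.NONE,           # gate page only, no explicit content
    "browse": Assurance.ESTIMATED,
    "video_playback": Assurance.ESTIMATED,
    "upload": Assurance.VERIFIED,
    "monetization": Assurance.VERIFIED,
}


def next_action(step: str, current: Assurance) -> str:
    """Allow if the session already meets the step's level, else ask to step up.

    Unknown steps default to the strictest level, so new features fail closed.
    """
    required = REQUIRED.get(step, Assurance.VERIFIED)
    if current >= required:
        return "allow"
    return f"step_up_to_{required.name.lower()}"
```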

Operationalize evidence. Ofcom’s guidance stresses evaluation, monitoring, and periodic review, including KPI-based checks for AI/ML methods and bias-minimization/fairness testing. This is the difference between “we added a vendor” and “we can defend our controls in an audit.”

Finally, build privacy into the architecture:

- minimize data collection,

- minimize retention,

- prefer attribute proofs over identity whenever possible,

- and avoid placing identity documents directly in the adult site’s blast radius—consistent with CNIL’s recommendations and EU blueprint direction.
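Minimal retention can be built into the record lifecycle itself. A minimal sketch, assuming a hypothetical record type and a 30-day retention period: raw artifacts are dropped as soon as the check completes (the kind of post-verification limit Louisiana writes into statute), and outcome records are purged once they age out. Setting a field to `None` only drops the in-memory reference; deleting stored copies is a storage-layer job.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

RETENTION = timedelta(days=30)  # hypothetical retention period for outcomes


@dataclass
class VerificationRecord:
    session_id: str
    passed: bool
    checked_at: datetime
    # Raw artifacts (selfie frames, document images). Ideally these never reach
    # the site; if a flow must handle them, they are dropped on completion.
    artifacts: Optional[bytes] = None


def finalize(record: VerificationRecord) -> VerificationRecord:
    """Once the check completes, keep the outcome and drop the artifacts."""
    record.artifacts = None
    return record


def purge_expired(records: List[VerificationRecord], now: datetime,
                  retention: timedelta = RETENTION) -> List[VerificationRecord]:
    """Keep only outcome records still inside the retention period."""
    return [r for r in records if now - r.checked_at <= retention]
```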

The near-term future: standardization, gatekeepers, and rising enforcement

Expect more enforcement, not less.

Arcom’s trajectory—moving from the biggest pornography services to smaller ones—signals that enforcement scope tends to widen over time once infrastructure and legal authority exist.

In the EU, the Commission is investing in standardization primitives (guidelines, investigations, and an age verification blueprint intended to be privacy-preserving and harmonized across Europe), suggesting a future where “build your own checkbox” becomes the least defensible option.

Gatekeepers are also moving. Apple’s 2026 step to block downloads of 18+ rated apps unless users are confirmed adults—paired with developer-facing age-range APIs—illustrates how the compliance perimeter is shifting upward into distribution layers.

And outside Europe and the U.S., Australia shows how quickly age assurance can expand from one category (social media accounts) to broader “high-risk” services and even AI systems—with large fines tied to non-compliance.

In short: age verification is becoming a durable, core component of digital platform compliance infrastructure, especially anywhere explicit content is accessible, hosted, or monetized. It remains contested, however: critics argue that age verification laws create invasive monitoring and censorship tools that threaten privacy and the free and open internet.
