Age Verification for E-Commerce: Keeping Age-Restricted Sales Compliant Without Hurting Conversions

Selling age-restricted products online is no longer just a “put up an 18+ checkbox and move on” problem. Regulators increasingly expect evidence-based age assurance, and they are explicit that self-declaration (entering a birthday, ticking “I’m 18+”) is not, on its own, a reliable control. Age gates (simple self-attestation prompts that restrict access to age-sensitive products or content) are widely used, but they are easy to bypass and insufficient as a standalone age verification method.

At the same time, the strongest age checks historically came with a real cost: extra steps, awkward ID uploads, manual review queues, and privacy anxiety at the exact moment you’re trying to close a sale. That is a recipe for abandonment in an environment where checkout friction already causes major drop-off across e-commerce. Due diligence in implementing robust age verification processes is essential to meet regulatory requirements, reduce risk, and ensure comprehensive compliance.

The good news is that the compliance–conversion trade-off has shifted. Modern age estimation and verification can be deployed in a layered, risk-based way: fast for most legitimate customers, tougher only when needed, and designed around data minimisation so you don’t collect more identity data than the law (or your customers) actually require. Privacy and data security remain significant concerns, because these systems handle sensitive personal information.

Introduction to Age Verification

Age verification is the process of confirming a user’s age before granting access to certain products, services, or content online. As digital environments expand and more services move to online platforms, the need for reliable age verification systems has never been greater. These systems are essential for protecting minors from accessing inappropriate or harmful material and for helping businesses comply with increasingly strict legal and regulatory requirements. Whether it’s online gaming, adult entertainment, or e-commerce, robust online age verification is now a baseline expectation for responsible operators. By implementing effective age verification mechanisms, businesses not only meet their legal obligations but also demonstrate a commitment to ethical practices and user safety, helping to protect minors in online spaces.

Why age verification in e-commerce is no longer optional

Across major markets, age assurance is moving from “best practice” to enforceable expectations. In the United Kingdom, for example, the regulator Ofcom has published “highly effective age assurance” guidance under the Online Safety Act framework and has announced an enforcement programme with a stated deadline for pornography services to implement age checks. Businesses that sell age-restricted goods or services must implement age verification systems to avoid fines and legal ramifications.

That same regulatory posture is spilling into adjacent “offline-danger” categories that already carry age limits. One clear illustration is online knife sales: a UK government snapshot describes a move toward a “two-step” age verification system for knives—age checks at point of sale and again at delivery—and it also highlights tougher penalties for selling bladed articles to under-18s.

In the United States, distance selling of tobacco and vaping products has been tightened through federal rules that directly address the online channel. The PACT Act regime (as summarised by the Public Health Law Center) requires age and identity verification at purchase, plus an ID check and signature at delivery, for covered delivery sales.

Pressure also comes from infrastructure partners, not just lawmakers. Payment providers and commerce platforms routinely restrict, prohibit, or condition support for categories such as alcohol, tobacco, vaping, gambling, and adult content; losing access to core payment rails can quickly become an existential risk for a regulated merchant.

The result: age verification is becoming a foundational control—part compliance mechanism, part operational prerequisite—rather than a superficial UX banner you can treat as optional. Businesses face legal ramifications if they fail to implement effective age verification systems, particularly in industries selling age-restricted products.

Age Restricted Goods and Services

Age-restricted goods and services are products and content that are legally available only to individuals who meet a specific minimum age requirement. This includes items such as alcohol, tobacco, vaping products, gambling services, and adult content. For businesses that sell or provide access to these goods, implementing effective age verification systems is critical to prevent minors from making purchases or accessing sensitive material. Age verification laws and minimum age requirements can vary significantly by country, state, and product category, and these regulations are evolving rapidly in response to new risks and technologies. Staying up to date with the latest legal requirements and deploying compliant age verification systems is essential for avoiding legal risk, protecting customers, and maintaining a responsible brand reputation.

The legal landscape e-commerce teams actually face

The hardest part for founders, product managers, and legal teams is not understanding that age restrictions exist; it’s that the rules differ by product and by jurisdiction, and they often impose obligations at multiple points (purchase, account creation, delivery). Many industries must comply with laws and regulations that mandate a minimum age threshold and require an age verification system to be implemented.
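Because obligations vary by product, jurisdiction, and checkpoint, it can help to keep them in one configuration table rather than scattering thresholds through the codebase. The sketch below assumes a hypothetical `RULES` mapping; its entries are illustrative values drawn from the examples in this article, not a complete or authoritative statement of the law. Unknown combinations raise an error instead of defaulting to “no check.”

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Tuple


class Checkpoint(Enum):
    """Points in the customer journey where an age check must happen."""
    PURCHASE = "purchase"
    DELIVERY = "delivery"
    BEFORE_DEPOSIT = "before_deposit"


@dataclass(frozen=True)
class AgeRule:
    minimum_age: int
    checkpoints: Tuple[Checkpoint, ...]


# Hypothetical, illustrative entries based on examples in this article.
# Real thresholds and checkpoints must come from legal review per market.
RULES: Dict[Tuple[str, str], AgeRule] = {
    ("US", "tobacco"): AgeRule(21, (Checkpoint.PURCHASE, Checkpoint.DELIVERY)),
    ("UK", "alcohol"): AgeRule(18, (Checkpoint.PURCHASE, Checkpoint.DELIVERY)),
    ("UK", "knives"): AgeRule(18, (Checkpoint.PURCHASE, Checkpoint.DELIVERY)),
    ("UK", "gambling"): AgeRule(18, (Checkpoint.BEFORE_DEPOSIT,)),
}


def rule_for(jurisdiction: str, category: str) -> AgeRule:
    """Return the configured rule; unknown combinations raise instead of passing."""
    try:
        return RULES[(jurisdiction, category)]
    except KeyError:
        raise LookupError(
            f"No age rule configured for {category!r} in {jurisdiction!r}"
        ) from None
```

Failing loudly on a missing rule is the design choice that matters here: a new market or product category should block launch until someone has decided what the rule is.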

For regulated nicotine and tobacco, the U.S. Food and Drug Administration (FDA) explains that federal “Tobacco 21” rules set a minimum purchase age of 21 and require ID checks for younger-looking purchasers in retail contexts, while remote-sales enforcement discussions routinely focus on stricter independent age verification for online transactions.

For delivery sales of cigarettes, smokeless tobacco, and ENDS, the PACT Act summary indicates a two-point model: the delivery seller verifies age/identity at purchase, and the carrier checks ID and obtains a signature at delivery. This matters because it undercuts a common misconception—“the courier will handle it”—which is rarely sufficient as a sole control for higher-risk categories.

For alcohol, rules are famously patchwork in the US because state law varies widely; the Alcohol and Tobacco Tax and Trade Bureau publicly notes that state laws differ and that some states prohibit direct shipment to individuals. The National Conference of State Legislatures similarly documents state-by-state statutory approaches to direct shipment. Operationally, carriers then overlay their own requirements: FedEx describes mandatory adult signature services for US alcohol deliveries, with government-issued photo ID checks before handoff, and UPS requires adult signature services for spirits shipments.

In the UK, the picture is different but equally specific. The government’s Section 182 guidance (Licensing Act 2003) addresses remote alcohol sales directly: it treats online/telephone transactions as “sales,” but emphasises that alcohol is not “served” until delivery and that age verification must occur before service, with delivery staff responsible for ensuring age checks occur when appropriate.

Digital goods can carry their own age verification duties in certain regulated verticals. A clean example is remote gambling: the UK Gambling Commission states that online gambling businesses must ask customers to prove their age and identity before they gamble, and its licence conditions require age verification before a customer can deposit, access free-to-play, or gamble.

In the European Union context, another dimension appears: privacy and children’s online protection. The Digital Services Act requires online platforms accessible to minors to implement “appropriate and proportionate measures” to protect minors, and the European Commission has published guidelines on protecting minors online to support compliance with that duty. While DSA obligations focus on platform safety rather than checkout compliance, they contribute to a broader regulatory norm: age assurance should be effective, proportionate, and privacy-conscious.

Finally, a practical distinction that matters for defensibility:

- Age gating is typically a deterrent or warning (e.g., “Are you 18+?”).

- Age verification / age assurance is about reliably determining whether the user meets a minimum age threshold, using a method regulators will accept as meaningful evidence that the user has reached the age required by law or regulation. Ofcom’s guidance is explicit that self-declaration alone is not “age assurance.”

The problem with traditional age checks

The oldest pattern—“enter your birthday” or click “I’m 18+”—has two structural failures.

First, it is easy to bypass, which makes it hard to defend as a serious control. Ofcom’s guidance lists “self-declaration of age” (including ticking a box to confirm you are 18+) among methods not capable of being “highly effective” on their own. Second, it can create a false sense of security inside the organisation (“we’ve handled age”), which becomes dangerous if enforcement arrives and your only artefact is a checkbox event in analytics.

The next “traditional” approach, manual ID upload, often swings too far the other way. Document-based methods ask users to upload a photo of a physical ID document, such as a driver's license or passport. Optical character recognition (OCR) then extracts the date of birth, usually alongside separate checks that the document is authentic. This can be slow, intrusive, and disproportionate for many purchases, particularly when the legal requirement is “over 18/21” rather than “identify this person.” It can also create a heavier compliance footprint, because biometric processing and identity documents raise higher data protection expectations.
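When the document is a passport, OCR often targets the machine-readable zone (MRZ) defined in ICAO Doc 9303. There, the date of birth is stored as `YYMMDD` followed by a single check digit. Here is a minimal sketch of validating that field, assuming the OCR step has already isolated the six digits and their check digit. The function names are hypothetical. The check digit catches many misreads, and the two-digit year still has to be resolved into a century.

```python
from datetime import date

_DIGITS = "0123456789"


def mrz_char_value(ch: str) -> int:
    """Numeric value of one MRZ character in the ICAO 9303 check-digit scheme."""
    if ch in _DIGITS:
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10
    if ch == "<":  # filler character
        return 0
    raise ValueError(f"Invalid MRZ character: {ch!r}")


def mrz_check_digit(field: str) -> int:
    """Weighted sum with repeating weights 7, 3, 1, modulo 10."""
    weights = (7, 3, 1)
    return sum(mrz_char_value(c) * weights[i % 3] for i, c in enumerate(field)) % 10


def parse_mrz_birth_date(yymmdd: str, check: str, today: date) -> date:
    """Validate an OCR-read MRZ birth date and resolve its two-digit year."""
    if len(yymmdd) != 6 or any(c not in _DIGITS for c in yymmdd):
        raise ValueError("Birth date must be six digits (YYMMDD)")
    if len(check) != 1 or check not in _DIGITS or mrz_check_digit(yymmdd) != int(check):
        raise ValueError("Check digit mismatch: possible OCR misread")
    yy, mm, dd = int(yymmdd[:2]), int(yymmdd[2:4]), int(yymmdd[4:])
    # A birth date cannot be in the future, so use the current century
    # unless that would place the date after today.
    year = today.year // 100 * 100 + yy
    if (year, mm, dd) > (today.year, today.month, today.day):
        year -= 100
    return date(year, mm, dd)  # raises ValueError for impossible dates
```

A check-digit mismatch should send the customer back to recapture the image, not to a rejection: it signals a bad read, not a bad document.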

Then there is the delivery-only model: “we’ll check ID at the door.” For alcohol, carriers and couriers do frequently require adult signature and ID checks, but regulators and policymakers openly debate whether point-of-sale controls must be strengthened for remote transactions, because delivery checks alone don’t prevent the attempted underage purchase in the first place. For tobacco/vapes, the PACT Act approach (purchase verification plus delivery ID/signature) again shows why “delivery only” is incomplete.

From a conversion standpoint, heavy-handed implementations also collide with known checkout behaviour. Baymard’s long-running research estimates that around 70% of shopping carts are abandoned on average, and its survey work shows that even one forced step—like account creation—drives noticeable abandonment for a meaningful minority of shoppers. Age checks that feel like account creation (or worse, like a compliance interrogation) predictably trigger that same behaviour.

The conversion vs compliance dilemma

Most regulated merchants feel trapped between two credible fears:

- Add friction, and you lose revenue today.

- Skip robust controls, and you risk fines, licence consequences, shutdowns by partners, or headline-level reputational damage later.

Implementing robust age verification not only helps achieve compliance but also builds user trust and demonstrates a commitment to responsible business practices.

What’s changed is the assumption that stronger age controls must look like heavyweight identity checks. Contemporary regulatory thinking is pushing toward outcomes (“highly effective” checks, fair and robust processes, and no underage access) rather than prescribing one exact method everywhere. Ofcom, for example, discusses age verification and age estimation, emphasises implementation quality, and explicitly considers usability (avoiding undue blocking of adults) as part of how providers should think about “highly effective” processes.

When implementing age verification, it is crucial to accurately confirm a user's age using methods such as document analysis, biometric data, database cross-checks, or third-party authentication systems. This ensures legal requirements are met and prevents underage access to sensitive or restricted content.

On the technology side, large public evaluations and standards efforts are maturing. The National Institute of Standards and Technology (NIST) runs ongoing evaluations of facial age estimation algorithms, framing them as benchmarks (not certifications) and documenting the technical variability that systems must handle. Meanwhile, government-led work such as Australia’s Age Assurance Technology Trial describes a market shift toward “low-friction” age assurance methods, plus privacy-preserving and reusable approaches, explicitly treating usability and proportionality as first-order design requirements.

A risk-based approach to age verification allows businesses to adapt to varying legal requirements across regions, ensuring compliance while maintaining flexibility.

In other words, the trade-off isn’t gone—but it is no longer fixed. With a layered design, many customers can clear an age threshold quickly, while edge cases (near-threshold, higher-risk, or legally mandated scenarios) can be escalated to stronger verification.

Modern online age verification for e-commerce

Modern age assurance works best when you stop treating “age verification” as a single binary step and instead design a layered compliance strategy that incorporates a range of age verification methods, including both document-based and biometric approaches.

A useful baseline distinction (because teams often mix these up):

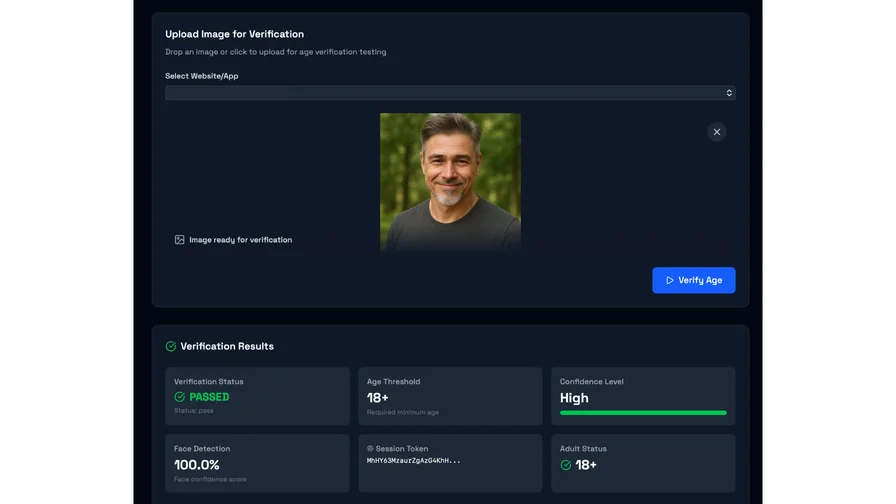

- Age estimation: predicts a likely age or age range using biometric or behavioural signals, typically to make a threshold decision (e.g., likely over/under 18) without needing a known date of birth. Facial age estimation is a common example: it predicts age from biometric cues such as wrinkles or facial structure.

- Age verification: confirms age against a source of truth (validated date of birth) such as an official document or authoritative record—often required in higher-stakes contexts. Document-based age verification methods frequently involve scanning a driver's license or other government-issued ID, using OCR technology to extract relevant information such as the birth date for confirmation.

- Identity verification: establishes who someone is, not just whether they meet an age threshold; this is a different (often heavier) compliance category and is not automatically required just because something is age-restricted.
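Once a date of birth has been validated, age verification reduces to a threshold test. Dividing a day count by 365.25 can misclassify someone on their birthday, so the sketch below compares calendar dates directly. The function names are hypothetical. This version treats 29 February birthdays as reached on 1 March in non-leap years, so confirm the rule that applies in your jurisdiction.

```python
from datetime import date


def age_on(birth_date: date, on: date) -> int:
    """Completed years of age on the date `on`."""
    # Subtract one year if this year's birthday has not happened yet.
    had_birthday = (on.month, on.day) >= (birth_date.month, birth_date.day)
    return on.year - birth_date.year - (0 if had_birthday else 1)


def meets_threshold(birth_date: date, minimum_age: int, on: date) -> bool:
    """Threshold decision from a verified date of birth."""
    return age_on(birth_date, on) >= minimum_age
```

Only the boolean from `meets_threshold` needs to flow onward. The birth date itself can stay inside the verification step.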

A practical “conversion-aware” pattern that shows up across regulatory guidance and trial evidence looks like this:

Most users pass through a fast, low-friction check (often age estimation), while users in a “buffer zone” near the threshold are escalated. The Australian trial is blunt on why: it argues it is a misunderstanding to expect age estimation to implement an exact age restriction without accepting a margin of error or using a buffer, and it notes that false negatives then become inevitable—so alternative methods are needed for correction.
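The buffer-zone logic can be sketched in a few lines. This is a minimal illustration, not any vendor’s algorithm. `estimation_decision` and the `buffer_years` parameter are hypothetical, and the right buffer depends on the estimator’s measured error near your threshold. An estimate below the buffer leads to escalation rather than a block, which gives false negatives the correction path the Australian trial calls for.

```python
from enum import Enum


class Outcome(Enum):
    PASS = "pass"
    ESCALATE = "escalate"


def estimation_decision(estimated_age: float, legal_minimum: int,
                        buffer_years: float) -> Outcome:
    """Pass only when the estimate clears the legal minimum plus a buffer."""
    if buffer_years < 0:
        raise ValueError("buffer_years must be non-negative")
    if estimated_age >= legal_minimum + buffer_years:
        return Outcome.PASS
    # Near-threshold and under-threshold estimates both go to a stronger
    # method; an estimate alone is never treated as proof of being underage.
    return Outcome.ESCALATE
```

For example, with an 18+ rule and a hypothetical 7-year buffer, an estimate of 31 passes, while an estimate of 22 is routed to another method.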

This is where risk-based flows become useful in e-commerce:

- Use an initial check that is quick and privacy-minimising for the majority.

- Add liveness / anti-spoofing when you need to ensure the signal comes from a real, present human (not a photo, replay, or synthetic media). Liveness detection is what stops presentation attacks, such as holding a static photo up to the camera, from fooling facial recognition and age estimation. Notably, NIST’s evaluation of age estimation algorithms explicitly states it is not an evaluation of a full age verification process and does not evaluate active attack detection mechanisms, so merchants should treat “age estimation accuracy” and “attack resistance” as related but distinct requirements.

- Escalate to stronger verification when regulation demands it (for example, the UK’s described knife-sale policy direction calls for official identity documents at point of sale), or when business risk signals suggest abuse.
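The three steps above can be combined into a single routing decision. The sketch below is one possible ordering, under stated assumptions. `CheckContext` and `next_step` are hypothetical names, and `estimate_passed` is assumed to be the result of a buffered estimation check. Legal mandates are evaluated first, so no estimation shortcut can override them. A production flow would also cap liveness retries.

```python
from dataclasses import dataclass
from enum import Enum


class Step(Enum):
    ALLOW = "allow"
    RETRY_LIVENESS = "retry_liveness"
    DOCUMENT_CHECK = "document_check"


@dataclass(frozen=True)
class CheckContext:
    liveness_passed: bool
    estimate_passed: bool          # result of the buffered estimation check
    document_required_by_law: bool
    risk_flagged: bool             # e.g. velocity or payment-mismatch signals


def next_step(ctx: CheckContext) -> Step:
    # Legal mandates come first: estimation cannot substitute for a required document.
    if ctx.document_required_by_law:
        return Step.DOCUMENT_CHECK
    # An age estimate only counts if it came from a live, present person.
    if not ctx.liveness_passed:
        return Step.RETRY_LIVENESS
    # Near-threshold estimates and abuse signals both escalate.
    if ctx.risk_flagged or not ctx.estimate_passed:
        return Step.DOCUMENT_CHECK
    return Step.ALLOW
```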

Modern regulators also increasingly expect you to think about fairness and reliability. Ofcom, for instance, explicitly calls out that age assurance methods relying on AI/ML should be tested, monitored, and assessed for discriminatory outcomes (connecting “highly effective” to more than just raw accuracy).
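One simple first metric for this kind of monitoring is how often genuine adults in each demographic group are escalated by the fast check (false rejections at the low-friction step). The sketch below assumes a labelled, consented test set. `TrialResult` and the function names are hypothetical. Deciding what gap between groups is acceptable is a policy question, not something the code can settle.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple


class TrialResult(NamedTuple):
    group: str        # demographic label from a consented, labelled test set
    is_adult: bool    # ground truth for this participant
    escalated: bool   # True if the fast check sent them to a stronger method


def adult_escalation_rates(results: Iterable[TrialResult]) -> Dict[str, float]:
    """Share of genuine adults escalated by the fast check, per group."""
    totals: Dict[str, int] = defaultdict(int)
    escalated: Dict[str, int] = defaultdict(int)
    for r in results:
        if not r.is_adult:
            continue  # escalating minors is the desired behaviour
        totals[r.group] += 1
        escalated[r.group] += int(r.escalated)
    return {g: escalated[g] / totals[g] for g in totals}


def max_gap(rates: Dict[str, float]) -> float:
    """Largest difference in escalation rate between any two groups."""
    return max(rates.values()) - min(rates.values()) if rates else 0.0
```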

In summary, modern online age verification employs a combination of age verification methods such as document scanning, AI-driven facial age estimation, database checks, and financial service verification to confirm user age while balancing privacy, accuracy, and compliance.

Where to implement age verification without wrecking UX

“Where do we put the check?” is not a minor implementation detail—it is the difference between a flow that quietly protects you and one that shouts “friction” at every legitimate buyer.

Regulatory guidance in adjacent domains provides a helpful mental model. For harmful content, Ofcom states that age assurance should be implemented at entry or that harmful content should not be visible before the user completes an age check; it also flags poor placement (content visible prior to completing a check) as a non-compliance risk in that context. While e-commerce is different, the principle travels well: don’t let the age check happen so late that you’ve already exposed the regulated inventory (or accepted payment) in a way you can’t defend. Access to age-restricted content should be granted only after the user’s age has been verified, for example through government ID checks, credit card information, or a third-party age verification service.

For most e-commerce businesses, the practical options cluster into five touchpoints, each with a different UX and compliance profile:

- Site entry gating: strong signalling, simple to understand, but can harm SEO and bounce rates if done bluntly; it also verifies nothing if it’s just self-declaration.

- Product-page gating: more targeted; you gate only the regulated SKUs, reducing unnecessary friction for the rest of the catalogue.

- Pre-checkout checkpoint: often the “sweet spot” for conversion—after intent is clear, before payment friction piles up; also aligns well with rules that expect a meaningful purchase-time check.

- Account creation / first purchase: useful when you need a reusable session so customers don’t re-verify every time, but it must be designed carefully so it doesn’t look like forced registration (a known abandonment driver).

- Payment and delivery stage: important as a backstop (especially where carriers require adult signature and ID checks), but risky as the only control, particularly for categories where law or policy increasingly expects earlier verification.
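Product-page and pre-checkout gating both depend on knowing which SKUs are restricted. A minimal sketch, assuming each product carries a hypothetical `minimum_age` attribute (0 for unrestricted items): a check is triggered only when the cart needs a higher threshold than the session has already cleared. Carts with no restricted items never see a prompt.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Product:
    sku: str
    minimum_age: int = 0  # 0 = unrestricted SKU


def required_threshold(cart: Iterable[Product]) -> int:
    """Highest minimum age among the items in the cart (0 if none restricted)."""
    return max((p.minimum_age for p in cart), default=0)


def needs_age_check(cart: Iterable[Product], cleared_threshold: int) -> bool:
    """True if the cart needs a higher threshold than the session has cleared."""
    return required_threshold(cart) > cleared_threshold
```

Tracking the highest threshold already cleared, rather than a single “verified” flag, means that a customer cleared for 18+ is prompted again only if they add a 21+ item.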

Offering multiple age verification options at these touchpoints enhances accessibility and convenience for users.

A conversion-aware pattern many teams land on is “verify once, remember securely.” That means: trigger age assurance only when a customer interacts with age-restricted products, then maintain a session flag (for example, using a cookie, token, or account-level attribute) so browsing and repeat purchases feel normal.
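One way to “remember securely” is a server-signed token that records only the threshold cleared and an expiry, never the birth date or image. The sketch below uses HMAC-SHA256 from the Python standard library. The token format, the `SECRET` placeholder, and the function names are hypothetical, and the TTL is a policy choice. A production system would more likely rely on an established session or signed-token library.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical placeholder: load a real secret from your secret store.
SECRET = b"replace-with-a-server-side-secret"


def _sign(payload: str) -> str:
    return hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()


def issue_age_token(session_id: str, threshold: int, ttl_seconds: int,
                    now: Optional[float] = None) -> str:
    """Token records only the threshold cleared and an expiry, not a birth date."""
    issued = time.time() if now is None else now
    payload = f"{session_id}|{threshold}|{int(issued) + ttl_seconds}"
    return f"{payload}|{_sign(payload)}"


def token_clears(token: str, session_id: str, required: int,
                 now: Optional[float] = None) -> bool:
    """Accept only untampered, unexpired tokens for this session and threshold."""
    parts = token.split("|")
    if len(parts) != 4:
        return False
    sid, threshold, expires, sig = parts
    expected = _sign(f"{sid}|{threshold}|{expires}")
    # Constant-time comparison on bytes avoids timing leaks and non-ASCII errors.
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False
    current = time.time() if now is None else now
    return sid == session_id and int(threshold) >= required and current < int(expires)
```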

Implementing a seamless age verification process helps reduce user friction and abandonment rates.

Age Gating

Age gating is a basic method of restricting access to age-restricted content or services by asking users to self-declare their age or enter their date of birth. While age gating is simple to implement, it is widely recognised as an unreliable way to verify age, because underage users can bypass it simply by entering false information. It does little to confirm a user’s actual age or to keep minors away from restricted content. Modern age verification systems instead combine methods such as facial age estimation, government-issued ID verification, and credit card checks to provide a much higher level of assurance, offering a more robust defence against underage access and age-related fraud.

Privacy, data protection, and customer trust

Age verification is a compliance control, but it is also a trust event: you are asking a customer to prove something personal at a sensitive moment. If the flow feels invasive—or if your privacy story is vague—customers abandon, regulators scrutinise, and internal teams inherit long-term risk. Reputable systems utilize encryption, data minimization, and secure storage for handling sensitive personal data under GDPR.

Under GDPR principles (as summarised in the European Data Protection Board SME guidance), data should be collected for explicit purposes and minimised to what is necessary and proportionate. This interacts sharply with common age-check implementations: asking for full ID documents when all you need is “18+” is hard to justify under minimisation, especially if you retain images by default.

Biometric processing adds another layer. The UK Information Commissioner’s Office explains that biometric data becomes “special category” data when processed for the purpose of uniquely identifying a person; this purpose-based framing matters because some age estimation designs aim to avoid unique identification and instead output only a threshold result. Privacy-preserving technology often provides a simple Yes/No result regarding age thresholds rather than storing actual birthdates. The EDPB similarly discusses how special-category status depends on purpose and technical processing in its guidance on video devices.

Outside Europe, biometric privacy exposure can be very concrete. In Illinois, the Biometric Information Privacy Act (BIPA) requires, among other things, a publicly available retention schedule and destruction rules tied to the purpose of collection (or a time limit based on last interaction). Even if you don’t operate in Illinois, BIPA has become a global cautionary tale: collect more than you need, retain it too long, and you invite legal and reputational risk.
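BIPA’s retention rule (740 ILCS 14/15(a)) requires destruction when the initial purpose of collection has been satisfied or within three years of the individual’s last interaction, whichever occurs first. The sketch below shows how that “whichever occurs first” logic might be encoded in a retention job. The function name is hypothetical, and this is not a substitute for counsel’s reading of the statute. For an age estimation flow that discards images within the session, the purpose-satisfied date would normally be the same day.

```python
from datetime import date
from typing import Optional


def destruction_deadline(last_interaction: date,
                         purpose_satisfied: Optional[date] = None) -> date:
    """Earlier of 'purpose satisfied' and three years after last interaction."""
    try:
        three_years = last_interaction.replace(year=last_interaction.year + 3)
    except ValueError:
        # 29 February has no counterpart three years later; use 28 February
        # so the deadline errs on the early (conservative) side.
        three_years = last_interaction.replace(year=last_interaction.year + 3, day=28)
    if purpose_satisfied is None:
        return three_years
    return min(purpose_satisfied, three_years)
```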

A privacy-conscious age verification design typically includes:

Clear user messaging (“we only check if you’re over X; we don’t need to know who you are”), strict retention limits, and strong security controls. The Australian trial explicitly flags privacy risk when providers retain full biometric or document data “even when such retention was not required or requested,” and it calls for proportionality. It also describes architectures where biometric samples are processed temporarily and deleted once no longer needed, aligning with minimisation goals. Maintaining user data security is critical for safeguarding sensitive information during the age verification process.
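A data-minimised design can be made concrete in the shape of what you keep. In the sketch below, the audit record proves that a check happened and what it concluded, but it contains no image, raw age estimate, or birth date. `estimate_age` is a hypothetical stand-in for whatever estimator or vendor call you use, and the record fields are assumptions. The function simply never stores or returns the image. That is not the same as secure erasure from memory.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class AgeCheckRecord:
    """Audit evidence that a check happened, with no image or birth date."""
    check_id: str
    threshold: int
    passed: bool
    method: str
    checked_at: str  # ISO 8601, UTC


def run_minimised_check(image: bytes, threshold: int,
                        estimate_age: Callable[[bytes], float],
                        buffer_years: float = 0.0) -> AgeCheckRecord:
    """Run an estimator and keep only the yes/no outcome."""
    passed = estimate_age(image) >= threshold + buffer_years
    # The image and the raw estimate are neither stored nor returned;
    # only the threshold decision leaves this function.
    return AgeCheckRecord(
        check_id=str(uuid.uuid4()),
        threshold=threshold,
        passed=passed,
        method="facial_estimation",
        checked_at=datetime.now(timezone.utc).isoformat(),
    )
```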

The implementation of age verification systems must balance legal compliance with the protection of user privacy.

Age Verification System Integration

Integrating an age verification system into an online business or platform involves balancing regulatory requirements, technical feasibility, and customer experience. Age verification can be integrated through a vendor API or SDK or built in-house, so businesses can tailor the integration to their specific needs. It’s important to select a system that is scalable, secure, and compliant with all relevant laws and regulations governing age-restricted goods. At the same time, the verification process should be streamlined and user-friendly to minimise customer frustration and drop-off. While integration can be complex, a well-chosen age verification system helps businesses meet their legal obligations, protect minors, and deliver a seamless customer experience.
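One integration decision worth making explicitly is what happens when the provider is slow or unavailable. The sketch below wraps a hypothetical `AgeProvider` interface behind a single function that fails closed: any provider error routes the customer to a fallback method instead of silently skipping the check. The interface and names are assumptions, not any vendor’s SDK. Catching a broad `Exception` is deliberate here, because vendor SDKs raise their own error types.

```python
from typing import Protocol


class AgeProvider(Protocol):
    """Hypothetical adapter interface wrapping whichever vendor SDK you use."""
    def check(self, session_id: str, threshold: int) -> bool: ...


def fast_check_outcome(provider: AgeProvider, session_id: str, threshold: int) -> str:
    """Return "pass" or "escalate"; provider errors never let a sale through."""
    try:
        return "pass" if provider.check(session_id, threshold) else "escalate"
    except Exception:
        # Fail closed: timeouts, outages, or SDK errors route the customer
        # to a fallback method instead of skipping the check.
        return "escalate"
```

Keeping vendor calls behind a small adapter like this also makes it easier to swap providers, or to run two in parallel during an evaluation.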

Choosing the right age verification solution for age restricted products and the path ahead

When choosing an age verification solution, e-commerce teams should evaluate it as both a legal control and a conversion component. You are not just buying “a check.” You are embedding an experience into your highest-value funnel.

At a minimum, teams should pressure-test:

Accuracy around your threshold, how “buffer zones” and escalation are handled, resistance to spoofing, fairness testing and monitoring, integration effort (SDK/API maturity), mobile UX, latency, geographic coverage, and privacy posture (especially retention and whether the system can operate without collecting unnecessary identity data). Where regulation or platform risk (for example, services popular with young users) calls for a document check, test how reliably the solution validates genuine IDs; where only an age threshold is required, confirm it can answer that question without establishing full identity.

When considering legal requirements, it is important to enforce a minimum age threshold and, where applicable, obtain parental consent for minors to ensure compliance with regulations and protect young users.

This is also where solution operating philosophy matters. A system can be “accurate” in a benchmark and still be a business problem if it is slow, brittle, or forces every buyer into ID upload. NIST explicitly positions its age estimation evaluation as a benchmark of algorithms, not a certification of end-to-end age verification, so merchants should validate real-world performance (devices, lighting, demographics, fraud patterns) in their own flows. If the verification process is not managed carefully, its complexity leads to user frustration and drop-off.
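Validating real-world performance usually comes down to a few numbers per segment (device type, lighting condition, and so on) from a pilot in your own flow. The sketch below computes escalation rate and nearest-rank p95 latency for one segment. The function names and the choice of p95 are assumptions, not a standard.

```python
import math
from typing import Dict, Sequence


def nearest_rank_percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile, with pct in (0, 100]."""
    if not values:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # integer-friendly arithmetic
    return ordered[rank - 1]


def pilot_summary(latencies_ms: Sequence[float],
                  escalated: Sequence[bool]) -> Dict[str, float]:
    """Headline numbers for one segment of a pilot (e.g. one device type)."""
    if not escalated or len(latencies_ms) != len(escalated):
        raise ValueError("need one latency and one outcome per attempt")
    return {
        "escalation_rate": sum(escalated) / len(escalated),
        "p95_latency_ms": nearest_rank_percentile(latencies_ms, 95),
    }
```

Comparing these numbers across segments shows where a benchmark-accurate system still creates friction in practice.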

Positioned carefully, Agemin fits the modern, layered approach described above: it markets a developer-focused API/SDK, real-time age estimation, and privacy-first architecture, and it explicitly frames its e-commerce approach around low latency, session-aware flows, and avoiding unnecessary ID uploads. Agemin also publishes product claims relevant to buyer evaluation (such as performance metrics, an ISO/IEC 27000-series security certification statement, and “zero PII stored” positioning), which compliance and security teams should review in the context of their own DPIA/DTIA obligations and internal risk model.

The direction of travel is unambiguous: enforcement is rising, partner platforms and payment rails continue to harden their category rules, and regulators increasingly expect age assurance that is demonstrably effective, not performative. The merchants who win will treat age verification as infrastructure: measurable, testable, privacy-conscious, and integrated in a way that respects the customer’s time. Balancing age verification with free speech rights also matters: advocacy groups such as the Free Speech Coalition have raised concerns about the impact of age verification laws on free speech, especially for adult entertainment and other controversial online content.

Internationally, governments are taking varied approaches. For example, the Australian government has proposed initiatives to combat identity fraud using facial recognition technology to verify individuals' identities against official records.

The rise of deepfake technology further complicates age verification by making it easier to create convincing fake identities. More broadly, fake IDs and other fraud tools can undermine the effectiveness of any age verification system.

Clear communication about the age verification process is essential to help manage user expectations and reduce confusion.

Future of Age Verification

The future of age verification is being shaped by rapid advances in technology and evolving regulatory expectations. Artificial intelligence, machine learning, and biometric data are enabling more accurate and efficient age estimation and verification methods, helping to prevent identity theft and underage access to age-restricted content. Privacy-preserving solutions, such as age verification tokens and non-intrusive age estimation, are gaining traction as businesses seek to verify age accurately without collecting excessive sensitive personal information. The growth of social media platforms and online gaming is also driving demand for robust age verification systems that can keep pace with new risks and user behaviours. As age verification laws and standards continue to develop, organisations like the Age Verification Providers Association are playing a key role in promoting best practices and industry standards. To stay compliant and protect their users, businesses must remain proactive, adopting current age assurance technology and verification methods to meet both regulatory requirements and customer expectations.
